Enhanced ChatGPT Detection

Detect and prevent inappropriate content, spam, or harmful language in chat using advanced GPT detection technology.

AI-Powered Detection Benefits

Instant Detection

Identify and address inappropriate content in conversations immediately, ensuring a safe and respectful chat environment.

Real-time Alerts

Receive instant notifications about potentially harmful language or spam, enabling proactive moderation and intervention.

Effortless Monitoring

Easily oversee and manage chat interactions, streamlining the process of ensuring compliance and safety.

Advanced ChatGPT Detection: Benefits and Features

Enhanced Accuracy

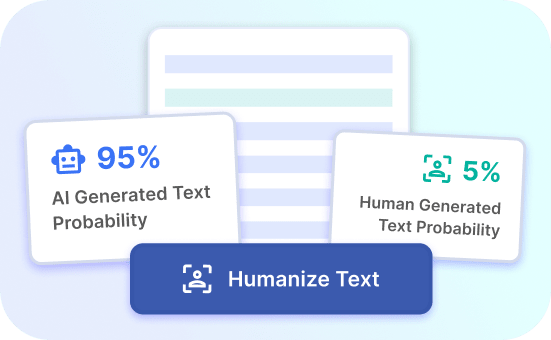

The ChatGPT detection tool is built to identify and flag GPT-generated content accurately. Using machine-learning models, it assesses whether a conversation was written by a person or generated by AI, giving users a dependable basis for keeping interactions authentic.

This accuracy lets businesses and online platforms monitor and limit the spread of AI-generated content. That matters for preserving the integrity and trustworthiness of online interactions and for guarding against misuse and deceptive practices.

Try Justdone ->

Real-Time Detection

Real-time detection identifies GPT-generated content as it is posted, so moderators can intervene and respond right away. This is especially valuable for platforms and communities that want to manage AI-generated conversations proactively: the sooner such content is flagged, the sooner its risks and unwanted influence can be contained.

Catching AI-generated content as it appears, rather than after it has spread, keeps users ahead of the problem and helps make online conversations more authentic, trustworthy, and safe.
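
To make the real-time idea concrete, here is a minimal Python sketch of stream monitoring. It is not Justdone's implementation or API: `Message`, `Alert`, `monitor_stream`, the `scorer` callable, and the 0.8 threshold are all hypothetical. `scorer` stands in for whatever AI-text detector is actually used and is assumed to return a probability in [0, 1] that a text is AI-generated.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, Iterator


@dataclass
class Message:
    author: str
    text: str


@dataclass
class Alert:
    message: Message
    score: float


def monitor_stream(
    messages: Iterable[Message],
    scorer: Callable[[str], float],  # hypothetical detector: text -> P(AI-generated)
    threshold: float = 0.8,          # illustrative cutoff, not a recommended value
) -> Iterator[Alert]:
    """Score each message as it arrives and yield an alert right away."""
    for msg in messages:
        score = scorer(msg.text)
        if score >= threshold:
            # Yielding instead of collecting a list lets the caller act on
            # this alert before later messages have been read.
            yield Alert(msg, score)


# Usage with a stand-in scorer that returns fixed scores.
fake_scores = {"see you at 5?": 0.1, "Certainly! Here are five key points...": 0.95}
stream = [
    Message("ann", "see you at 5?"),
    Message("bot42", "Certainly! Here are five key points..."),
]
for alert in monitor_stream(stream, lambda text: fake_scores[text]):
    print(alert.message.author, alert.score)  # prints: bot42 0.95
```

Because `monitor_stream` is a generator, the same function works on a finite list or on an unbounded feed of incoming messages.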

Customizable Alert System

The ChatGPT detection tool offers a customizable alert system that lets users set their own parameters for flagging AI-generated content. Platforms and businesses can therefore align the tool with their specific moderation and content-management requirements.

Because the flagging criteria are user-defined, they can be adjusted as AI-generated text and conversational tactics evolve, which keeps content-moderation strategies resilient over time.
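
One way to model user-defined flagging criteria is as a list of rules, each with its own score cutoff and scope. The Python sketch below uses a hypothetical schema: `AlertRule`, `matching_rules`, and the fields `min_score`, `min_length`, and `channels` are illustrative names, not Justdone settings, and the sketch assumes a detector score in [0, 1] has already been computed for each message.

```python
from dataclasses import dataclass, field


@dataclass
class AlertRule:
    """One user-defined flagging rule (hypothetical schema)."""
    name: str
    min_score: float                 # flag when detector score >= this value
    min_length: int = 0              # skip messages shorter than this many characters
    channels: set[str] = field(default_factory=set)  # empty set means "all channels"


def matching_rules(rules: list[AlertRule], channel: str, text: str, score: float) -> list[str]:
    """Return the names of every rule the message triggers, in rule order."""
    hits = []
    for rule in rules:
        if rule.channels and channel not in rule.channels:
            continue  # rule is scoped to other channels
        if len(text) < rule.min_length:
            continue  # too short to judge under this rule
        if score >= rule.min_score:
            hits.append(rule.name)
    return hits


rules = [
    AlertRule("strict-reviews", min_score=0.6, min_length=40, channels={"reviews"}),
    AlertRule("global-default", min_score=0.9),
]
print(matching_rules(rules, "reviews", "x" * 50, 0.7))  # ['strict-reviews']
print(matching_rules(rules, "chat", "hello", 0.95))     # ['global-default']
```

Keeping the criteria as data means tightening or loosening a rule does not require changing code, which is what makes it practical to revise them as AI-generated text evolves.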

Effective Strategies for ChatGPT Detection

Regular Algorithm Updates

Language models and the ways they are used keep changing, so the detection algorithm needs regular updates to stay effective. Continuously refining it against newly observed patterns and tactics keeps the tool able to flag the latest GPT-generated content.

Cross-Referencing User Behavior

Cross-referencing content with user behavior can improve detection accuracy. Analyzing engagement metrics, such as how often and how quickly an account posts, alongside the characteristics of the text gives the tool a broader set of criteria for identifying GPT-generated conversations.

Behavioral signals complement content-based detection: each can catch cases the other misses, which improves the overall precision and reliability of the tool.
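
A simple way to cross-reference the two kinds of signal is a weighted blend of a content-based score and a behavior-based score. In this Python sketch, `behavior_score`, `combined_score`, the reference values (10 messages per minute, 30-second replies), and the 0.7 weight are all illustrative assumptions rather than the tool's actual parameters; a production system would more likely learn such weights from labeled data.

```python
def behavior_score(messages_per_minute: float, median_reply_seconds: float) -> float:
    """Heuristic in [0, 1]; higher means the account behaves less like a typical person.

    The saturation points below are illustrative assumptions, not tuned values.
    """
    # Posting-rate signal: reaches 1.0 at 10 or more messages per minute.
    rate_signal = min(messages_per_minute / 10.0, 1.0)
    # Reply-speed signal: 1.0 for instant replies, falling linearly to 0.0 at 30 s.
    speed_signal = max(0.0, 1.0 - median_reply_seconds / 30.0)
    return (rate_signal + speed_signal) / 2


def combined_score(content_score: float, behavior: float, content_weight: float = 0.7) -> float:
    """Weighted blend of a text-based detector score and a behavior score."""
    if not 0.0 <= content_weight <= 1.0:
        raise ValueError("content_weight must be between 0 and 1")
    return content_weight * content_score + (1.0 - content_weight) * behavior


# A borderline text score (0.6) combined with bot-like behavior raises the total.
b = behavior_score(messages_per_minute=12, median_reply_seconds=0)
print(round(combined_score(0.6, b), 2))  # 0.72
```

With an assumed flagging threshold of 0.7, the text score alone (0.6) would not trigger an alert, but the blended score would.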

Collaborative Moderation Approaches

Combining AI-based detection with human review can improve the identification of GPT-generated content. Automated detection contributes speed and scale, and human moderators contribute judgment on ambiguous cases; together they form a more balanced and robust content-moderation framework than either provides alone.
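
One common pattern for splitting work between automated detection and human moderators is to act automatically only on high-confidence scores and to queue the ambiguous middle band for a person. The Python sketch below assumes detector scores in [0, 1]; `Route`, `route`, `triage`, and the (0.5, 0.9) band are hypothetical choices for illustration.

```python
from enum import Enum


class Route(Enum):
    AUTO_FLAG = "auto_flag"        # high confidence: act without waiting for a person
    HUMAN_REVIEW = "human_review"  # ambiguous: send to a moderator queue
    ALLOW = "allow"                # low score: treat as human-written


def route(score: float, review_band: tuple[float, float] = (0.5, 0.9)) -> Route:
    """Map a detector score in [0, 1] to a moderation route."""
    low, high = review_band
    if not 0.0 <= low <= high <= 1.0:
        raise ValueError("review_band must satisfy 0 <= low <= high <= 1")
    if score >= high:
        return Route.AUTO_FLAG
    if score >= low:
        return Route.HUMAN_REVIEW
    return Route.ALLOW


def triage(scored: list[tuple[str, float]]) -> dict[Route, list[str]]:
    """Group message IDs by route so the review bucket can feed a moderator queue."""
    buckets: dict[Route, list[str]] = {r: [] for r in Route}
    for message_id, score in scored:
        buckets[route(score)].append(message_id)
    return buckets


result = triage([("m1", 0.95), ("m2", 0.62), ("m3", 0.10)])
print(result[Route.HUMAN_REVIEW])  # ['m2']
```

Widening the review band sends more items to moderators (more human effort, fewer automated mistakes); narrowing it does the opposite.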

Behavioral Pattern Analysis

Analyzing behavioral patterns for anomalies in conversational dynamics can further strengthen detection. By identifying deviations from typical user interactions, the tool can pinpoint conversations that may be AI-generated, including subtle variations that content analysis alone might miss.
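
Anomaly detection of this kind can be as simple as a z-score test: measure how far a new observation sits from a baseline, in standard deviations. In the Python sketch below, the metric (composing speed in words per minute), the baseline values, and the threshold of 3 are illustrative assumptions, and `is_anomalous` is a hypothetical helper. A flagged value is a reason for closer review, not proof of AI generation.

```python
import statistics


def is_anomalous(baseline: list[float], value: float, z_threshold: float = 3.0) -> bool:
    """Return True if `value` lies more than `z_threshold` sample standard
    deviations from the mean of `baseline`."""
    if len(baseline) < 2:
        return False  # too little data to estimate spread
    mean = statistics.mean(baseline)
    stdev = statistics.stdev(baseline)  # sample standard deviation
    if stdev == 0:
        return value != mean  # any deviation from a constant baseline stands out
    return abs(value - mean) / stdev > z_threshold


# Hypothetical composing speeds (words per minute) observed for typical users.
typing_wpm = [38, 42, 40, 45, 35, 41]
print(is_anomalous(typing_wpm, 43))   # False: within normal variation
print(is_anomalous(typing_wpm, 400))  # True: a 400-word reply written in one minute
```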

Contextual Content Evaluation

Evaluating content in context can refine detection further. By assessing each message against the ongoing conversation and checking its thematic coherence, the tool can better distinguish human from AI-generated interactions, improving its overall accuracy.
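
As a rough illustration of a context check, the Python sketch below measures how much a new message overlaps with the recent conversation using bag-of-words cosine similarity. This is a deliberately simple stand-in (a real system would more likely compare sentence embeddings), `topical_similarity` is a hypothetical helper, and a low score only marks a message as off-topic, which is one signal among several rather than evidence of AI authorship on its own.

```python
import math
import re
from collections import Counter


def _bag_of_words(text: str) -> Counter:
    """Lowercase word counts; punctuation is ignored."""
    return Counter(re.findall(r"[a-z0-9']+", text.lower()))


def topical_similarity(message: str, context: list[str]) -> float:
    """Cosine similarity in [0, 1] between a message and the recent context."""
    a = _bag_of_words(message)
    b = _bag_of_words(" ".join(context))
    # Counter returns 0 for missing words, so non-shared words add nothing.
    dot = sum(count * b[word] for word, count in a.items())
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # empty text has no topic to compare
    return dot / (norm_a * norm_b)


thread = [
    "The battery on this phone lasts two days.",
    "Mine drains fast when gaming, battery is weak.",
]
print(topical_similarity("How long does the battery last?", thread) > 0)  # True
print(topical_similarity("Buy cheap watches now!", thread))               # 0.0
```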

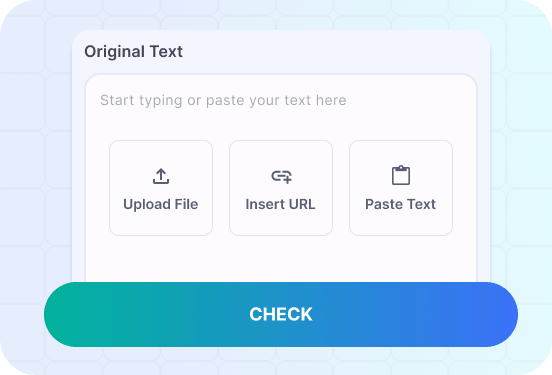

How to Use the AI Content Detector

1. Choose a template: select the template you need from the template gallery.
2. Provide more details: fill out the carefully selected inputs to get the best-quality output.
3. Enjoy the results: copy the output, save it for later, rate it, or click the Regenerate button.

Example ChatGPT Detection Scenarios

The following illustrative scenarios show how ChatGPT detection can identify AI-generated content and help keep conversations authentic.

Scenario: Detecting AI-Generated Product Reviews on an E-Commerce Platform

On a busy e-commerce platform, the ChatGPT detection tool identifies a surge of AI-generated product reviews. Using its real-time detection, the tool flags the suspicious reviews promptly, before they can erode consumer trust or skew purchase decisions.

By dealing with the influx quickly, the platform keeps its product evaluations authentic and reliable. Consumers can keep trusting the feedback they read, and the marketplace stays transparent and credible.

The customizable alert system lets the platform tune its detection parameters to the characteristics of product reviews, so AI-generated reviews can be targeted specifically. The platform can also adjust these parameters as generation patterns evolve, strengthening its defenses against deceptive content.

Pairing automated detection with human judgment gives the platform a robust, balanced approach to moderation that protects the authenticity of product reviews and consumers' confidence in the marketplace.

Behavioral pattern analysis helps identify anomalous reviewing activity. By examining engagement metrics and interaction patterns, the tool detects subtle deviations that indicate AI-generated reviews and flags them, supporting the platform's commitment to transparency and authenticity.

Contextual content evaluation adds another check by considering how relevant and thematically coherent each review is. These assessments help the tool distinguish genuine customer evaluations from AI-generated content, so that product feedback stays credible.

Scenario: Curbing AI-Generated Misinformation in a Social Media Community

In an active social media community, the ChatGPT detection tool helps curb the spread of AI-generated misinformation. Its accuracy allows it to identify and flag misleading AI-generated posts quickly, limiting how far deceptive content travels within the community.

Real-time detection enables proactive intervention: moderators and users are alerted to deceptive content promptly, which limits the impact of AI-generated misinformation and keeps user interactions and information sharing trustworthy.

With the customizable alert system, the platform tailors its detection parameters to AI-generated misinformation and can adapt them as tactics evolve, reinforcing its defenses against deceptive content.

Combining AI-based detection with human review keeps content moderation balanced and comprehensive, making the community more resilient to AI-generated misinformation and protecting the integrity of user interactions.

Behavioral pattern analysis examines conversational dynamics, user behavior, and content interactions to identify anomalous activity that may indicate AI-generated misinformation, helping moderators find and address misleading posts.

Contextual content evaluation assesses each post against the ongoing discussion and its thematic coherence, helping the tool tell genuine user contributions apart from AI-influenced misinformation and keeping information sharing transparent and credible.